Evaluating Afterschool Programming During COVID-19: Making a Plan for Uncharted Territories

Written By: Brittany Hite

Project Team: Dr. Tiffany Berry (PI), Brittany Hite (PM), Evangelia Theodorou (RA), and Emma Cohen (RA)

Evaluation Project: Montebello Unified School District’s Extended Learning Opportunities

COVID-19 has impacted almost every aspect of life, including our ability to evaluate after-school programs. As 2020 unfolded, people around the world were affected by the coronavirus pandemic, also termed COVID-19. The virus swept the nation, forcing everything but essential businesses to shut down. Montebello Unified School District’s Extended Learning Opportunities (MUSD’s ELO) was among the many programs affected, as youth were no longer allowed to attend in-person programming. While three-quarters of programs across the country underwent layoffs, reassignments, and furloughs, MUSD’s ELO realized youth needed staff and programming more than ever during the pandemic. Thus, MUSD’s ELO leadership and program staff came together to adapt programming into an online format so they could continue providing emotional support, enrichment opportunities, and homework help for the thousands of youth at their sites. In this blog, I will discuss the important pivots our team made while continuing to evaluate after-school programs during this tumultuous and ever-changing time.

YDEval has been collaborating with MUSD’s ELO for the past nine years to conduct multi-site evaluations across 25 sites in Montebello, CA. Due to COVID-19, MUSD’s ELO moved to online programming using a two-pronged approach: (1) Google Classroom to upload resources and activities and (2) phone call check-ins with youth and families. Although all sites had a Google Classroom and conducted phone call check-ins, sites varied in how they implemented online programming: some sites held video calls with individual youth or groups of youth, some created videos of themselves leading activities and uploaded them to Google Classroom, and others only posted district resources or videos they found online.

YDEval partnered with MUSD’s ELO to evaluate the transition to online programming. This evaluation elucidated key strengths, a few remaining needs, and best practices for transitioning to and providing online programming. Given the ever-changing context, YDEval collaboratively developed a staff survey and focus group questions to ensure both measures were relevant and responsive to MUSD’s ELO’s context and needs. In May and June 2020, 150 frontline staff and site managers responded to the staff survey. Additionally, over 30 staff from 6 sites participated in meaningful focus group discussions. Best practices for after-school virtual programming during COVID-19 that were derived from this evaluation are discussed in another blog post.

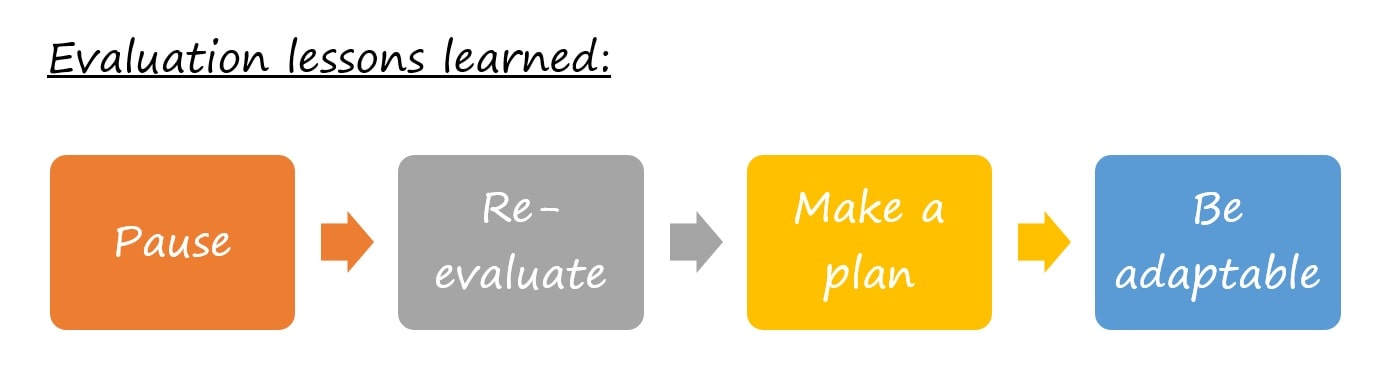

PAUSE

This time is as crazy for evaluators as it is for everyone else in the world. Allow yourself the right to be a human during these times just like everyone else. Along with that, keep in mind the importance of: pausing and taking some deep breaths, being gracious with yourself, and practicing self-care by taking needed, intentional breaks. The most effective plan will not come out of a rushed, reactionary effort but out of a calm, thoughtful one.

RE-EVALUATE

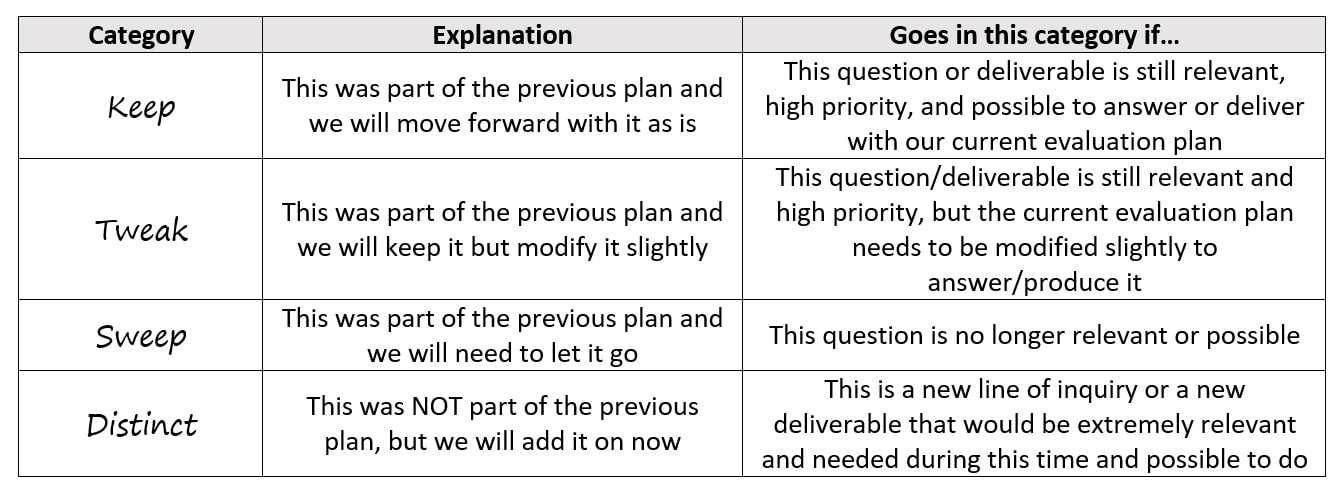

Once you’ve gathered and centered yourself, you’ll want to re-evaluate your current evaluation plan through collaborative conversations with your stakeholders. Throughout these conversations, keep four main categories in mind: keeps, tweaks, sweeps, and distincts (see the table below for an explanation of each).

By thinking through each of these four categories (i.e., keeps, tweaks, sweeps, and distincts), you and your evaluation stakeholders will be able to identify what in your current plan should stay or be modified, what should be removed, and what is not in your current plan but should be added.

As you are thinking through what to keep, what to tweak, what to sweep, and potential distinct things to add, it is also important to think about these three questions:

(1) Has your evaluation budget remained the same or diminished?

If your evaluation budget has remained the same: it is helpful to keep the number of things you ‘sweep’ (i.e., remove from the evaluation completely) and the number of ‘distinct’ things (i.e., completely new additions to the evaluation) roughly on par, so that anything you take out of the budget is replaced with something that is financially equivalent but more needed and relevant to the current situation. For example, our evaluation had two youth surveys (one for middle school and one for high school) that had previously been created, programmed, and used. We traded both of those surveys for an entirely new staff survey, which had to be created and programmed from scratch, to gain insight into staff perceptions of the transition to virtual programming.

If your evaluation budget has diminished: it is helpful to focus your keeps on the HIGHEST priorities (i.e., only keep things in the evaluation plan that are going to be the most useful, most responsive, and most actionable). Additionally, you can try to tweak and adapt current evaluation plan tasks into tasks that will yield similar data in a more cost-effective way. Finally, be liberal with your sweeps (i.e., only keep need-to-haves and remove anything that could be identified as a nice-to-have) and conservative about adding distinct things to the plan.

(2) What are your stakeholders’ needs?

It would be unwise to try to re-evaluate the evaluation plan on your own. Instead, make these decisions collaboratively with the program leadership team and key stakeholders to ensure you are being responsive to their needs (especially during this unprecedented time, with so many unforeseen needs constantly emerging). Additionally, evaluations should likely have at least one question that assesses participants’ needs during this time. Stakeholder needs are changing on a weekly basis, so assuming that they are the same as before COVID-19, or that they will remain constant after a single check-in, is rather silly.

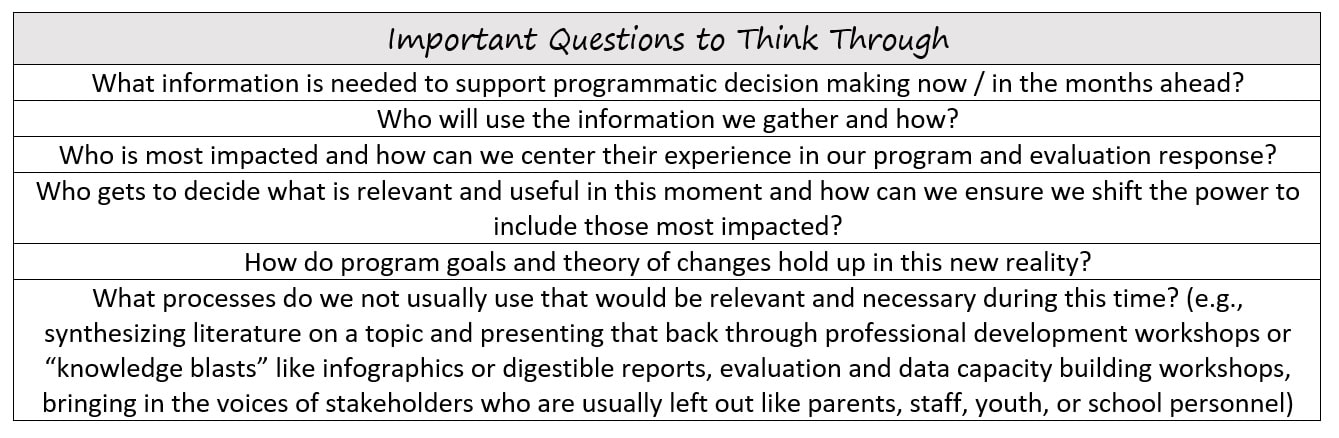

(3) What questions should be guiding your decision-making?

We have been granted the beautiful opportunity to pause in this hectic work-life we call evaluation and re-evaluate how we are doing things and how we should be doing things to best meet the current challenges and to enhance the field of evaluation as a whole. Use this time to sit with your team and program stakeholders and make sure you think through these questions:

MAKE A PLAN

(1) Do not forget the basics

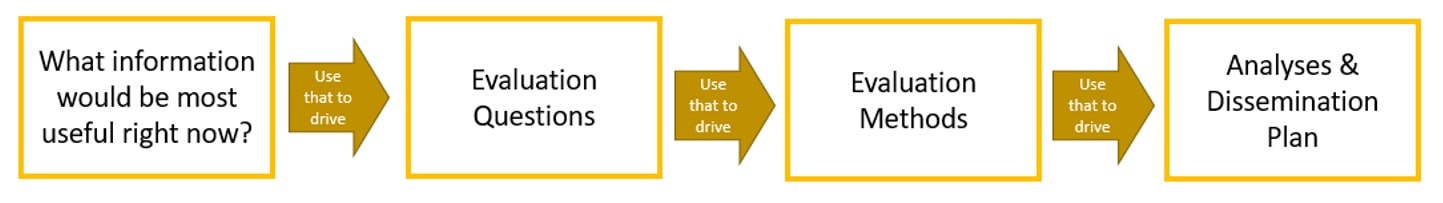

Even though this time is so new and different, it is important to stick to the basics of evaluation. When you conduct an evaluation, it is important to lead with intended use. So, start from the basics and take stock again of what is really important right now: what information would be most useful for this organization to know? Once you have identified the intended use, you can let it guide your evaluation questions, the methods you use to answer those questions, and your dissemination plan.

(2) Context is key!

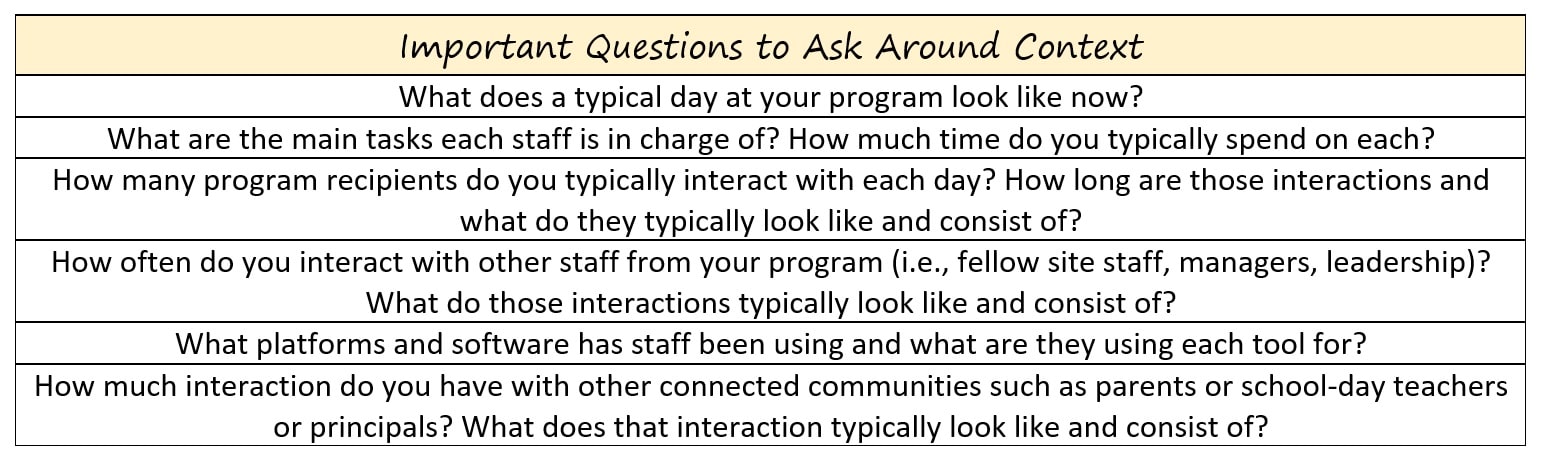

The program setting has likely changed vastly (and is continuing to change). The only way your evaluation will be remotely close to helpful and useful is to take context into consideration. Collaboration with stakeholders at all levels of the program (i.e., leadership, managers, frontline staff, and program recipients) will help ensure the evaluation is responsive to current needs and the shifting context. Without a thorough understanding of context, evaluators could waste precious program resources and participant time collecting data that ends up not being relevant, responsive, or useful.

(3) Innovation station

It is important now more than ever to think of innovative ways to collect data, innovative ways to conceptualize key concepts in our fields, and innovative deliverables that will provide useful and responsive information.

Given the constraints of COVID-19, gathering data electronically seems even more urgent than it did before. Some innovative ways to collect data from program recipients and program stakeholders through technology include participant vlogs (i.e., video blogs), ESM apps (i.e., experience sampling method applications), daily journals or diaries, video call focus groups, and arts-based data collection.

An especially important question to be asking ourselves during this time is ‘how can we collect data in ways that respect and honor participants’ current emotional, mental, fiscal, and physical situations?’ Program recipients, program staff, and even program evaluators have all dealt with some level of trauma throughout this pandemic. We should be especially mindful of finding innovative ways to collect data that do not trigger previous trauma and that perhaps even help create a healing environment.

After-school program evaluations tend to focus on attendance, engagement, implementation fidelity, program quality, satisfaction, and outcomes. In this new context, it is important to think about how these concepts have shifted. Additionally, we need to think of innovative ways to capture and understand them. For example, engagement may now include the number of comments youth left on a video on Google Classroom or how many youth were participating in the chat on Zoom.
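To make the engagement example concrete, here is a minimal Python sketch of how those kinds of indicators might be tallied by site. Everything in it is an assumption for illustration: the `Interaction` record, the `"video_comment"` and `"chat_message"` labels, and the sample data are made up, and the sketch does not use any Google Classroom or Zoom export format or API. It simply assumes someone has already logged each interaction as a row.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    """One hypothetical engagement record, e.g. typed in from a site log."""
    site: str
    youth_id: str
    kind: str  # assumed labels: "video_comment" or "chat_message"


def summarize_engagement(records):
    """Return per-site counts of interactions and of distinct active youth."""
    interactions = Counter()
    active_youth = {}
    for r in records:
        interactions[(r.site, r.kind)] += 1
        active_youth.setdefault(r.site, set()).add(r.youth_id)
    return {
        site: {
            "video_comments": interactions[(site, "video_comment")],
            "chat_messages": interactions[(site, "chat_message")],
            "active_youth": len(ids),
        }
        for site, ids in active_youth.items()
    }


# Made-up example data, for illustration only.
sample = [
    Interaction("Site A", "y1", "video_comment"),
    Interaction("Site A", "y1", "chat_message"),
    Interaction("Site A", "y2", "chat_message"),
    Interaction("Site B", "y3", "video_comment"),
]
summary = summarize_engagement(sample)
print(summary)
```

Counting distinct youth separately from total interactions is a deliberate choice here: a few very active youth can inflate comment counts, so the two numbers together may give a fairer picture of engagement than either one alone.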

Finally, frequent data collection and reports may not be the most beneficial thing for stakeholders at this time. It is also important to think about how we, as evaluators, can use the other skills we have to build staff capacity, conduct professional development workshops and trainings, synthesize and translate extant research into digestible and actionable information for staff and programs, and set up systems to prepare for when things go back to functioning in an in-person capacity.

BE ADAPTABLE

(1) Prioritize your stakeholders’ needs and be explicit about that

These programs are delivering on-the-ground services to youth and families who need their support. Sometimes, the best way to support these programs is to let them know you are ready to adapt and be as flexible as possible to meet their needs, even if that means waiting for lengthy periods of time and then acting extremely quickly once a decision is made.

(2) Be ready to roll with the punches

At the end of the day, you can and should plan and prepare as much as possible while recognizing that things are ever-changing. Thus, it is important to be flexible with literally everything. The evaluation questions may quickly become irrelevant and need to be revised. The data collection methods may become unfeasible, for any number of reasons, a week before you planned to launch them. Figure out simple ways to pivot. The deliverable timeline may need to be accelerated or postponed depending on the moment-by-moment decisions made by local, state, and federal governing bodies. Be prepared to ramp up the timeline and deliver something sooner, even if it’s just “good enough” rather than the highest-quality product you’ve delivered. Similarly, be prepared to postpone a deliverable or completely change its format, audience, or content if needs change rapidly.

Resources:

- The Forum for Youth Investment discusses “Practical Solutions to Adapt Research and Evaluation Plans During COVID-19”

- Better Evaluation discusses “Adapting evaluations in the time of COVID-19”

- American Evaluation Association blog AEA365 has posts such as “Three Lessons for Evaluators Amid COVID-19”